ELK stack install CentOS

11/29/2023

Then install the RPM with this yum command (if you downloaded a different release, substitute the filename here): wget -no-cookies -no-check-certificate -header "Cookie: gpw_e24=http%3A%2F%2Foraclelicense=accept-securebackup-cookie" "".Following the steps in this section means that you accept the Oracle Binary License Agreement for Java SE.Ĭhange to your home directory and download the Oracle Java 8 (Update 73, the latest at the time of this writing) JDK RPM with these commands:

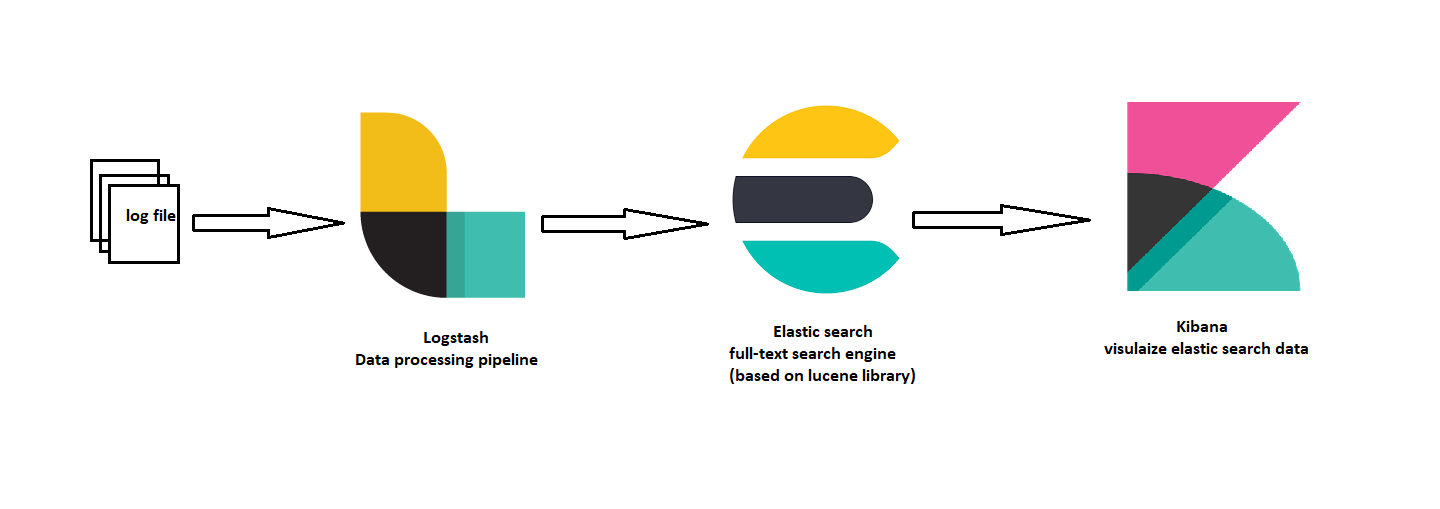

It should, however, work fine with OpenJDK, if you decide to go that route. We will install a recent version of Oracle Java 8 because that is what Elasticsearch recommends. Let’s get started on setting up our ELK Server! Install Java 8Įlasticsearch and Logstash require Java, so we will install that now. In addition to your ELK Server, you will want to have a few other servers that you will gather logs from. For this tutorial, we will be using a VPS with the following specs for our ELK Server: The amount of CPU, RAM, and storage that your ELK Server will require depends on the volume of logs that you intend to gather. If you would prefer to use Ubuntu instead, check out this tutorial: How To Install ELK on Ubuntu 14.04. Instructions to set that up can be found here (steps 3 and 4): Initial Server Setup with CentOS 7. To complete this tutorial, you will require root access to an CentOS 7 VPS. Filebeat will be installed on all of the client servers that we want to gather logs for, which we will refer to collectively as our Client Servers. We will install the first three components on a single server, which we will refer to as our ELK Server. Filebeat: Installed on client servers that will send their logs to Logstash, Filebeat serves as a log shipping agent that utilizes the lumberjack networking protocol to communicate with Logstash.Kibana: Web interface for searching and visualizing logs, which will be proxied through Nginx.Logstash: The server component of Logstash that processes incoming logs.Our ELK stack setup has four main components: The goal of the tutorial is to set up Logstash to gather syslogs of multiple servers, and set up Kibana to visualize the gathered logs.

It is possible to use Logstash to gather logs of all types, but we will limit the scope of this tutorial to syslog gathering. It is also useful because it allows you to identify issues that span multiple servers by correlating their logs during a specific time frame. Both of these tools are based on Elasticsearch, which is used for storing logs.Ĭentralized logging can be very useful when attempting to identify problems with your servers or applications, as it allows you to search through all of your logs in a single place. Kibana is a web interface that can be used to search and view the logs that Logstash has indexed. Logstash is an open source tool for collecting, parsing, and storing logs for future use. We will also show you how to configure it to gather and visualize the syslogs of your systems in a centralized location, using Filebeat 1.1.x. In this tutorial, we will go over the installation of the Elasticsearch ELK Stack on CentOS 7-that is, Elasticsearch 2.2.x, Logstash 2.2.x, and Kibana 4.4.x.
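The idea of correlating logs from several servers within a specific time frame, which the tutorial gives as a reason for centralized logging, can be illustrated with a small sketch. This is plain Python over made-up data, not how Logstash or Kibana work internally; the host names, messages, and the `correlate` helper are all hypothetical.

```python
from datetime import datetime, timedelta

# Hypothetical sample data: (host, timestamp, message) tuples standing in for
# syslog entries that have already been collected from several client servers.
SAMPLE_LOGS = [
    ("web-1", datetime(2023, 11, 29, 10, 0, 5), "nginx: upstream timed out"),
    ("db-1", datetime(2023, 11, 29, 10, 0, 3), "mysqld: too many connections"),
    ("web-2", datetime(2023, 11, 29, 9, 30, 0), "sshd: accepted publickey"),
    ("db-1", datetime(2023, 11, 29, 10, 4, 59), "mysqld: connection restored"),
]


def correlate(entries, center, window):
    """Return entries from all hosts within +/- window of center, oldest first."""
    start, end = center - window, center + window
    hits = [entry for entry in entries if start <= entry[1] <= end]
    return sorted(hits, key=lambda entry: entry[1])


if __name__ == "__main__":
    incident = datetime(2023, 11, 29, 10, 0, 0)
    for host, ts, msg in correlate(SAMPLE_LOGS, incident, timedelta(minutes=5)):
        print(f"{ts.isoformat()} {host:6} {msg}")
```

Because every entry carries its host alongside a timestamp, one time-window filter over the combined list shows events from different servers side by side, which is much harder when each server's logs sit in a separate file on a separate machine.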


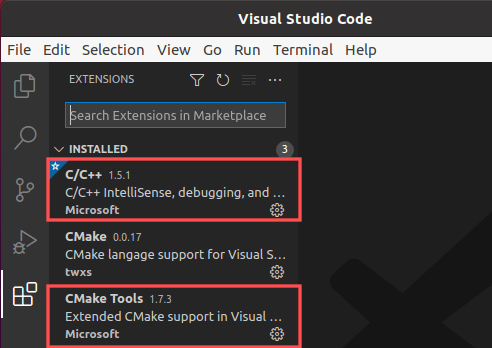

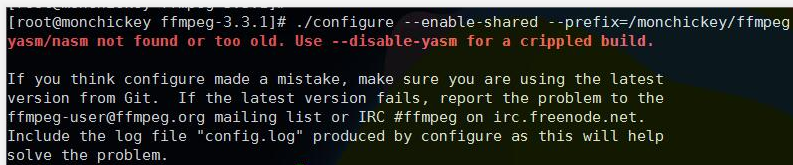

In the above example, I simply use the default paths for AppVeyor's VMs. The catch here is that when you go for MSYS Makefiles, or MinGW Makefiles for that matter, you should define your PATH variable to include the binaries to the compiler toolchain. This example successfully builds on AppVeyor with both of the toolchains. With hello.c having the contents #include ĬMakeLists.txt having the contents cmake_minimum_required(VERSION 3.10)Īnd finally, appveyor.yml having the following image: Visual Studio 2013 Below is a minimal working example based on your code.Īssume that you have the following directory structure. Instead, you should (re)define your PATH environment variable to include the corresponding (i.e., MinGW or Cygwin) binaries, and select an appropriate generator. To be able to use the locally installed gcc compiler, you do not need to play with any of the (related) variables inside your CMakeLists.txt file. Using toolchain files, you can not only define different compiler/linker setups for your host but also do cross-compilation, in which the host and target devices are of different architecture.ĮDIT.

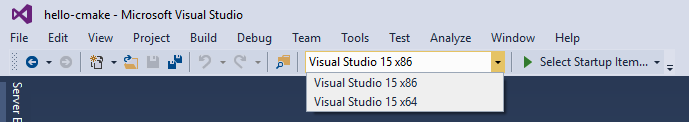

Having this in mind, please do not mess with the CMAKE_C(XX)_COMPILER variables directly in your CMakeLists.txt, but rather think of using toolchain files to define the specific compiler/linker properties. You define the dependencies and set the language requirements for your targets, and you ask CMake to generate files to help you build your targets. Name of generator.ĬMAKE_GENERATOR:INTERNAL=Visual Studio 15 2017ĬMAKE_GENERATOR_INSTANCE:INTERNAL=C:/Program Files (x86)/Microsoft Visual Studio/2017/BuildTools/ĬMake is a tool that is intended for defining dependencies among the targets in your application. It is trying to point to the correct compiler, but it is using the Visual Studio Generator still. When i look at the cache file created when using the toolchain file, i find something interesting. Set(CMAKE_FIND_ROOT_PATH_MODE_INCLUDE ONLY) Set(CMAKE_FIND_ROOT_PATH_MODE_LIBRARY ONLY) Set(CMAKE_FIND_ROOT_PATH_MODE_PROGRAM NEVER) # search headers and libraries in the target environment, search # adjust the default behaviour of the FIND_XXX() commands: SET(CMAKE_FIND_ROOT_PATH C:/MyPrograms/MinGW/bin/ ) # The target of this operating systems is I tried to make it work using the following toolchain file, but it still chooses Visual Studio build tools. But when i try to set the CMAKE_C_COMPILER variable, it seems to fail. Specifically, I want it to use the locally installed GCC compiler. I am trying to get CMAKE to use a specific compiler when running. AuthorWrite something about yourself. No need to be fancy, just an overview. ArchivesCategories |
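The answer's advice (put the compiler's bin directory on PATH and pick a matching generator instead of setting compiler variables) can be sketched as a small helper that prepares the configure call. This is only an illustration: `build_cmake_invocation` is a hypothetical name, the MinGW path is the one from the question's toolchain file, and the `-S`/`-B` options assume CMake 3.13 or newer.

```python
import os


def build_cmake_invocation(toolchain_bin, source_dir, build_dir,
                           generator="MinGW Makefiles"):
    """Return (argv, env) for a CMake configure run that finds gcc via PATH."""
    env = dict(os.environ)
    # Prepend the toolchain directory so its gcc is found before anything else.
    env["PATH"] = toolchain_bin + os.pathsep + env.get("PATH", "")
    # -G selects the generator; -S/-B (CMake 3.13+) name source and build dirs.
    argv = ["cmake", "-G", generator, "-S", source_dir, "-B", build_dir]
    return argv, env


if __name__ == "__main__":
    # Hypothetical MinGW location, copied from the question's toolchain file.
    argv, env = build_cmake_invocation("C:/MyPrograms/MinGW/bin", ".", "build")
    print(" ".join(argv))
    # To actually configure: subprocess.run(argv, env=env, check=True)
```

Passing a modified copy of the environment keeps the change local to the CMake process, so the rest of the system keeps its usual PATH. Since the generator is recorded in the cache, as the question's CMakeCache.txt excerpt shows, switching generators is best done in a fresh build directory.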
